LLM Experiments #1: Shorter Sentences

Note: Experimenting with quick writeups as I play around with LLMs using my homegrown agentic workflow system.

Both today’s idea and the workflow system itself came from a dream of building a “daily pulse” of the podcast news ecosystem. As part of that, I’m exploring ways to present podcast transcripts concisely. In particular, I want summaries that condense the text while preserving the speakers’ voices.

A summary can be abstractive or extractive. Abstractive summaries paraphrase the original text; extractive summaries “extract” by pruning, removing sentences, phrases, or words while preserving the original structure where possible (see this paper for a discussion).

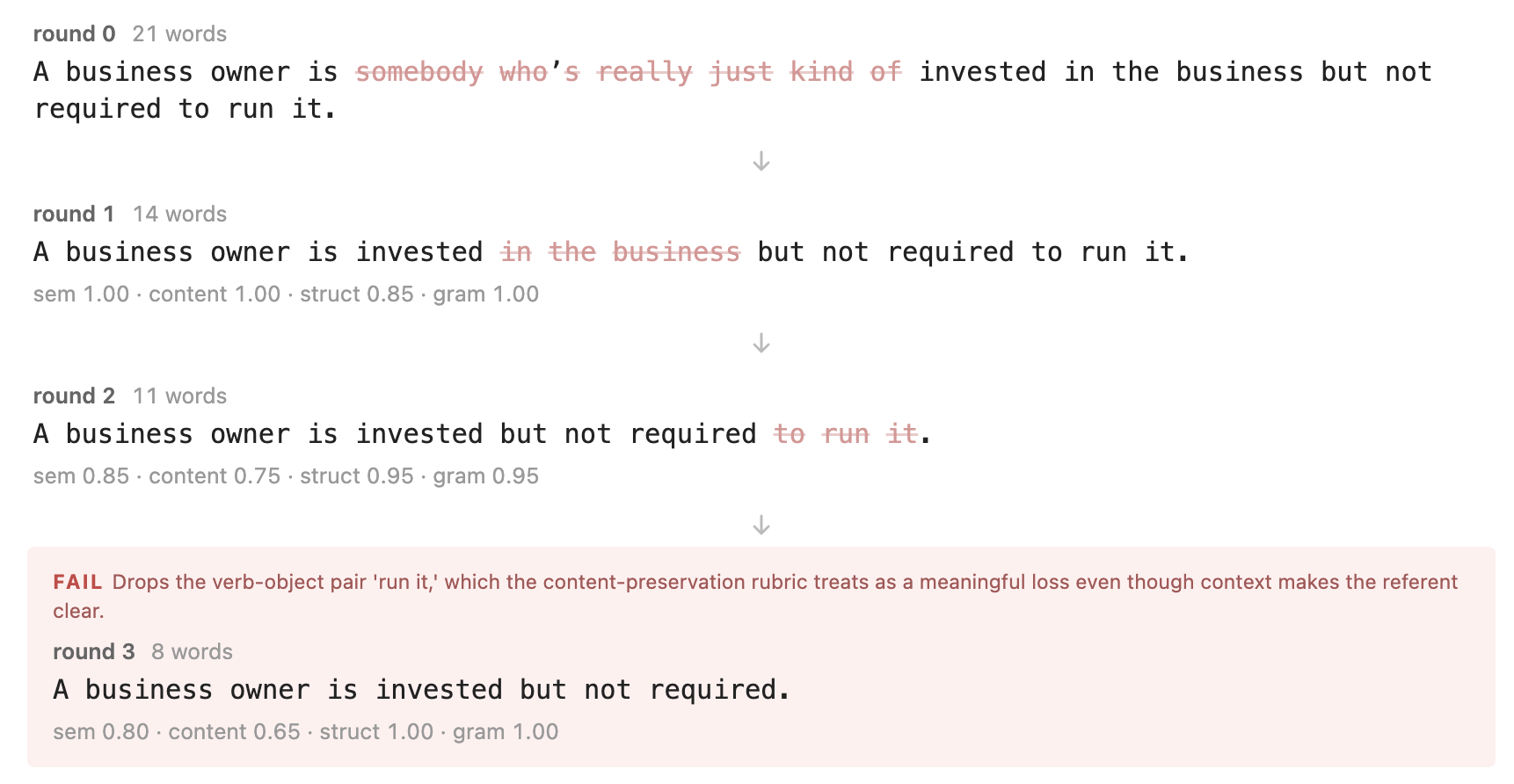

For my first attempt I tried a simple two-agent approach with a generator and a verifier. Given a sentence, the generator proposes multiple candidate simplifications, and the verifier either accepts the best one or rejects them all. The process repeats until no simpler acceptable candidate remains.
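Here’s a minimal sketch of that generate-and-verify loop, assuming the generator and verifier are plain Python callables (in practice each would wrap an LLM call). The names `simplify`, `drop_one_word`, and `keep_key_words`, the `max_rounds` safety cap, and the toy verifier’s fixed keyword set are all hypothetical stand-ins, not the actual workflow system.

```python
from typing import Callable, Optional

# Hypothetical signatures: each would wrap an LLM call in a real system.
Generator = Callable[[str], list[str]]
Verifier = Callable[[str, list[str]], Optional[str]]


def simplify(sentence: str, generate: Generator, verify: Verifier,
             max_rounds: int = 10) -> str:
    """Repeatedly ask for shorter candidates until the verifier rejects them all."""
    current = sentence
    for _ in range(max_rounds):
        # Only consider candidates that are actually shorter than the current text.
        candidates = [c for c in generate(current) if len(c) < len(current)]
        if not candidates:
            break
        chosen = verify(current, candidates)
        if chosen is None:  # verifier rejected every candidate
            break
        current = chosen
    return current


def drop_one_word(text: str) -> list[str]:
    """Toy generator: every version of `text` with exactly one word removed."""
    words = text.split()
    return [" ".join(words[:i] + words[i + 1:]) for i in range(len(words))]


KEEP = {"we", "need", "to", "ship", "it"}  # toy stand-in for semantic judgment


def keep_key_words(current: str, candidates: list[str]) -> Optional[str]:
    """Toy verifier: accept the shortest candidate that keeps every KEEP word."""
    ok = [c for c in candidates if KEEP <= set(c.split())]
    return min(ok, key=len) if ok else None


print(simplify("we really need to just ship it", drop_one_word, keep_key_words))
# → we need to ship it
```

The toy verifier makes its call from a fixed word list with no view of the surrounding conversation, which is exactly the kind of context-free judgment that can cut too much.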

Here’s the first try on a sentence from this podcast episode:

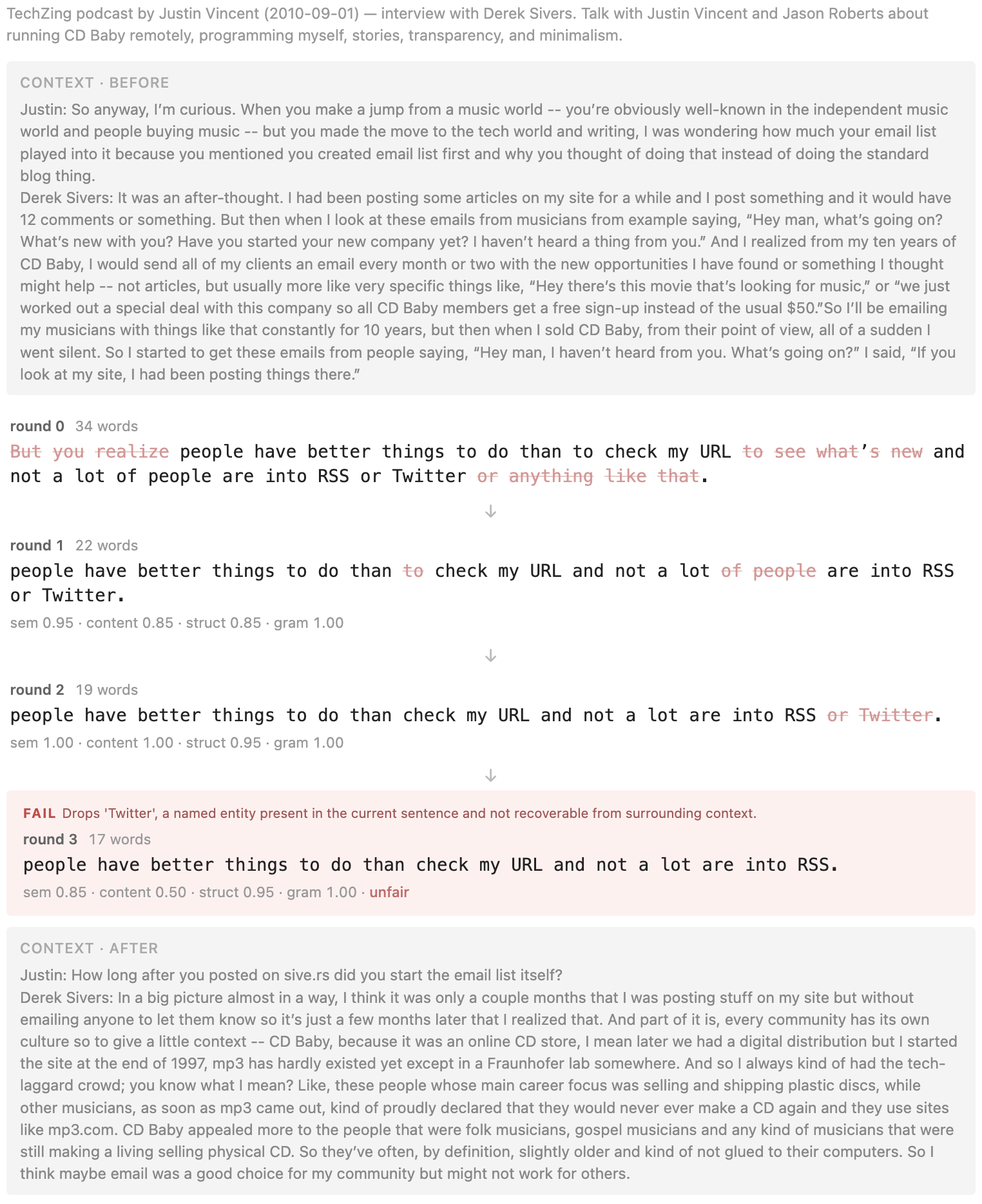

What’s worth keeping depends on context, so I give the LLM a snippet of the surrounding conversation to help it judge what’s semantically meaningful.
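A minimal sketch of how such a context snippet might be assembled, assuming the transcript is a list of speaker turns and the target sentence sits at a known index. `build_prompt`, the `window` parameter, the `>>` marker, and the prompt wording are all hypothetical illustrations rather than the prompts the system actually uses.

```python
def build_prompt(turns: list[str], index: int, window: int = 2) -> str:
    """Show the target turn with up to `window` turns of context on each side."""
    start = max(0, index - window)
    end = min(len(turns), index + window + 1)
    lines = []
    for i in range(start, end):
        marker = ">>" if i == index else "  "  # mark the sentence to simplify
        lines.append(f"{marker} {turns[i]}")
    return (
        "Conversation excerpt (target sentence marked with >>):\n"
        + "\n".join(lines)
        + "\n\nPropose shorter versions of the marked sentence by deleting words, "
        "keeping anything the surrounding conversation makes important."
    )


turns = ["A: one", "B: two", "A: three", "B: four", "A: five"]
print(build_prompt(turns, 2, window=1))
```

The window size trades prompt length against how much of the conversation the model can see when deciding what matters.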

In its current form the algorithm is a bit overeager and sometimes lops off semantically significant phrases. Based on this initial result, I’m already re-running it with adjustments: tweaking the prompts and experimenting with ways to guide the model so it can better tell which parts of a sentence matter most.

I like this idea – it’s an antidote to the tendency of generative AI systems to increase the separation between raw materials and the final result. Instead, we can summarize people in their own words.